Enterprise-class co-designed software and hardware solutions from HPE and NVIDIA accelerate development and deployment of GenAI applications

Today at NVIDIA GTC, Hewlett Packard Enterprise (NYSE: HPE) announced updates to one of the industry’s most comprehensive AI-native portfolios to advance the operationalization of generative AI (GenAI), deep learning, and machine learning (ML) applications. The updates include:

- Availability of two HPE and NVIDIA co-engineered full-stack GenAI solutions.
- A preview of HPE Machine Learning Inference Software.
- An enterprise retrieval-augmented generation (RAG) reference architecture.
- Support to develop future products based on the new NVIDIA Blackwell platform.

“To deliver on the promise of GenAI and effectively address the full AI lifecycle, solutions must be hybrid by design,” said Antonio Neri, president and CEO at HPE. “From training and tuning models on-premises, in a colocation facility or the public cloud, to inferencing at the edge, AI is a hybrid cloud workload. HPE and NVIDIA have a long history of collaborative innovation, and we will continue to deliver co-designed AI software and hardware solutions that help our customers accelerate the development and deployment of GenAI from concept into production.”

“Generative AI can turn data from connected devices, data centers and clouds into insights that can drive breakthroughs across industries,” said Jensen Huang, founder and CEO at NVIDIA. “Our growing collaboration with HPE will enable enterprises to deliver unprecedented productivity by leveraging their data to develop and deploy new AI applications to transform their businesses.”

Supercomputing-powered GenAI training and tuning

Announced at SC23, HPE’s supercomputing solution for generative AI is now available to order for organizations seeking a preconfigured and pretested full-stack solution for the development and training of large AI models. Purpose-built to help customers accelerate GenAI and deep learning projects, the turnkey solution is powered by NVIDIA and can support up to 168 NVIDIA GH200 Grace Hopper Superchips.

The solution enables large enterprises, research institutions, and government entities to streamline the model development process with an AI/ML software stack for GenAI and deep learning projects, including LLMs, recommender systems, and vector databases. Delivered with services for installation and setup, this turnkey solution is designed for use in AI research centers and large enterprises to realize improved time-to-value and speed up training by 2-3X. For more information or to order it today, visit HPE’s supercomputing solution for generative AI.

Enterprise-class GenAI tuning and inference

Previewed at Discover Barcelona 2023, HPE’s enterprise computing solution for generative AI is now available to customers directly or through HPE GreenLake with a flexible and scalable pay-per-use model. Co-engineered with NVIDIA, the pre-configured fine-tuning and inference solution is designed to reduce ramp-up time and costs by offering the right compute, storage, software, networking, and consulting services that organizations need to produce GenAI applications. The AI-native full-stack solution gives businesses the speed, scale and control necessary to tailor foundation models using private data and deploy GenAI applications within a hybrid cloud model.

Featuring a high-performance AI compute cluster and software from HPE and NVIDIA, the solution is ideal for lightweight fine-tuning of models, RAG, and scale-out inference. The fine-tuning time for a 70 billion parameter Llama 2 model running on this solution decreases linearly with node count, taking six minutes on a 16-node system¹. The speed and performance enable customers to realize faster time-to-value by improving business productivity with AI applications like virtual assistants, intelligent chatbots, and enterprise search.
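The footnoted benchmark figures (an average epoch time of 594 seconds on eight nodes and 369 seconds on 16 nodes) can be turned into a speedup and a parallel-efficiency figure. The short Python calculation that follows is an illustrative sketch only: the helper names are hypothetical, and the only inputs are those two footnoted numbers, not a general performance model. Two data points cannot establish a trend; the sketch simply compares them against ideal proportional scaling.

```python
# Footnoted benchmark figures: average fine-tuning epoch time in seconds, keyed by node count.
epoch_seconds = {8: 594, 16: 369}


def speedup(t_base: float, t_scaled: float) -> float:
    """Ratio of the baseline epoch time to the scaled-out epoch time."""
    return t_base / t_scaled


def parallel_efficiency(t_base: float, n_base: int, t_scaled: float, n_scaled: int) -> float:
    """Measured speedup divided by the ideal (proportional) speedup n_scaled / n_base."""
    return speedup(t_base, t_scaled) / (n_scaled / n_base)


s = speedup(epoch_seconds[8], epoch_seconds[16])
eff = parallel_efficiency(epoch_seconds[8], 8, epoch_seconds[16], 16)
# Epoch time on 16 nodes if doubling the node count exactly halved the time.
ideal_16 = epoch_seconds[8] * 8 / 16

print(f"speedup 8 -> 16 nodes: {s:.2f}x")
print(f"parallel efficiency: {eff:.0%}")
print(f"ideal 16-node epoch: {ideal_16:.0f} s; measured: {epoch_seconds[16]} s "
      f"({epoch_seconds[16] / 60:.2f} min)")
```

With these inputs the calculation reports a speedup of about 1.61x for doubling the node count, roughly 80% of ideal proportional scaling (a perfectly proportional 16-node epoch would take 297 seconds), and a measured 16-node epoch of about 6.15 minutes, which matches the "six minutes" figure.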

Powered by HPE ProLiant DL380a Gen11 servers, the solution is pre-configured with NVIDIA GPUs, the NVIDIA Spectrum-X Ethernet networking platform, and NVIDIA BlueField-3 DPUs. The solution is enhanced by HPE’s machine learning platform and analytics software, NVIDIA AI Enterprise 5.0 software with the new NVIDIA NIM microservices for optimized inference of generative AI models, as well as NVIDIA NeMo Retriever and other data science and AI libraries.

To address the AI skills gap, HPE Services experts will help enterprises design, deploy, and manage the solution, which includes applying appropriate model tuning techniques. For more information or to order it today, visit HPE’s enterprise computing solution for generative AI.

From prototype to productivity

HPE and NVIDIA are collaborating on software solutions that will help enterprises take the next step by turning AI and ML proofs-of-concept into production applications. Available to HPE customers as a technology preview, HPE Machine Learning Inference Software will allow enterprises to rapidly and securely deploy ML models at scale. The new offering will integrate with NVIDIA NIM to deliver NVIDIA-optimized foundation models using pre-built containers.

To assist enterprises that need to rapidly build and deploy GenAI applications that feature private data, HPE developed a reference architecture for enterprise RAG, available today, that is based on the NVIDIA NeMo Retriever microservice architecture. The offering consists of a comprehensive data foundation from HPE Ezmeral Data Fabric Software and HPE GreenLake for File Storage. The new reference architecture will offer businesses a blueprint to create customized chatbots, generators, or copilots.

To aid in data preparation, AI training, and inferencing, the solution merges the full spectrum of open-source tools and solutions from HPE Ezmeral Unified Analytics Software and HPE’s AI software, which includes HPE Machine Learning Data Management Software, HPE Machine Learning Development Environment Software, and the new HPE Machine Learning Inference Software. HPE’s AI software is available on both HPE’s supercomputing and enterprise computing solutions for generative AI to provide a consistent environment for customers to manage their GenAI workloads.

Next-gen solutions built on NVIDIA Blackwell platform

HPE will develop future products based on the newly announced NVIDIA Blackwell platform, which incorporates a second-generation Transformer Engine to accelerate GenAI workloads. Additional details and availability for forthcoming HPE products featuring the NVIDIA GB200 Grace Blackwell Superchip, the HGX B200, and the HGX B100 will be announced in the future.

About Hewlett Packard Enterprise

Hewlett Packard Enterprise (NYSE: HPE) is the global edge-to-cloud company that helps organizations accelerate outcomes by unlocking value from all of their data, everywhere. Built on decades of reimagining the future and innovating to advance the way people live and work, HPE delivers unique, open, and intelligent technology solutions as a service. With offerings spanning Cloud Services, Compute, High Performance Computing & AI, Intelligent Edge, Software, and Storage, HPE provides a consistent experience across all clouds and edges, helping customers develop new business models, engage in new ways, and increase operational performance.

For more information, visit: www.hpe.com

¹ Based on initial internal benchmarks of llama-recipes finetuning.py that tracked the average fine-tuning epoch time at 594 seconds on eight nodes and 369 seconds on 16 nodes, using flash attention and parameter-efficient fine-tuning.

View source version on businesswire.com: https://www.businesswire.com/news/home/20240318761801/en/

Media: Cristina Thai cristina.thai@hpe.com

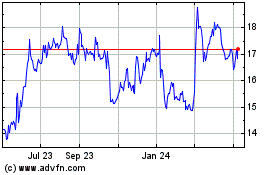

Hewlett Packard Enterprise (NYSE:HPE)

Historical Stock Chart

From May 2024 to Jun 2024

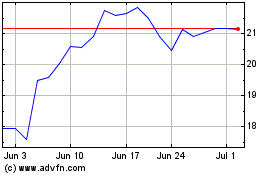

Hewlett Packard Enterprise (NYSE:HPE)

Historical Stock Chart

From Jun 2023 to Jun 2024