By Emily Glazer and Sam Schechner

The 2020 election spurred social-media giants to adopt

aggressive changes to how they police political discourse. Now the

questions are whether that new approach will last and whether it

should.

During the course of the contentious U.S. presidential campaign,

and its messy denouement, Facebook Inc. and Twitter Inc. have taken

steps that would have been unthinkable four years ago. They have

applied fact-checking labels to posts from the U.S. president,

deleted entire online communities and hobbled some functions of

their own platforms to slow the spread of what they deemed false or

dangerous content.

The companies -- in particular Twitter -- this month have been

strict with warning labels on claims of voter fraud from President

Trump and some of his supporters while Americans spent days

awaiting vote-counting that was delayed by an unprecedented number

of mail-in ballots.

For some lawmakers, mostly Democrats, the shift was overdue,

while many Republicans accuse the tech firms of enforcing the rules

inconsistently and allege the Silicon Valley giants are biased

against conservatives.

Sen. Josh Hawley (R, Mo.), a Trump supporter who has been

critical of social media, has tweeted about the moves and referred

to them as the "#BigTech crackdown."

The companies have maintained they are committed to making sure

users get reliable information about the election and not allowing

falsehoods to spread. Taking on that role puts them in the

uncomfortable position of having to make decisions about what they

believe is true and what isn't.

The aggressiveness marks a shift for the social-media industry,

which was paralyzed for years about whether to intervene on

political speech, says Jenna Golden, former head of political and

advocacy sales at Twitter.

"There was a bit of feeling frozen in what do we do, how do we

do it and how do we do it fairly," said Ms. Golden, who now runs

her own consulting firm. "I saw the thinking happening, but no one

was ready to make any decisions."

A key turning point came in May, when Twitter for the first time

placed a fact-checking advisory on one of Mr. Trump's tweets. By

the time of the election, such notices were almost commonplace. On

Saturday, the day media outlets called the race for

now-President-elect Joe Biden, Mr. Trump tweeted six times --

Twitter labeled half of them as being disputed or misleading.

Mr. Trump has said Twitter is "out of control" and is censoring

his views on the election.

Facebook also labeled Mr. Trump's posts, though it didn't hide

them as did Twitter, which required users to click through labels

to see the content. Facebook took other steps to intervene in how

content spread, including dismantling a fast-growing group called

"Stop the Steal," created by pro-Trump organizations that were

organizing protests of vote counts around the country. Facebook

said it made the move because it "saw worrying calls for violence

from some members of the group." The group's organizers said

Facebook was selectively enforcing its rules to silence them.

The company later tightened its grip on speech across its

platforms, including its Instagram photo-sharing app, invoking some

of the emergency tools that executives previously described as

their "break-glass" options to respond to possible postelection

unrest.

Facebook spokesman Andy Stone said it has spent years preparing

for safer, more secure elections. "There has never been a plan to

make these temporary measures permanent and they will be rolled

back just as they were rolled out -- with careful execution," he

said, adding that temporary election protections were also used

during the 2018 midterms and in other global elections.

While the severity of their measures varied, all the major

social-media platforms took steps to label false election

information or limit the spread of content they deemed dangerous.

The popular short-video app TikTok, owned by ByteDance Ltd., banned

all searches for "election fraud" last week.

YouTube says it has put an emphasis on elevating video-search

results from authoritative sources and is also limiting

recommendations to videos advancing baseless claims of voter fraud

or premature calls of victory. A search Saturday on YouTube for an

unfounded allegation that Democrats had used U.S. hacking software

to alter election results -- something that Chris Krebs, director

of the U.S. Cybersecurity and Infrastructure Security Agency,

described as "nonsense" -- returned several videos advancing the

claim. By Sunday, results for that search had a banner saying, "The

AP has called the Presidential race for Joe Biden."

A YouTube spokeswoman said it can take time for the company's

system to trigger such banners. "We are exploring options to bring

in external researchers to study our systems and learn more about

our approach and we will continue to invest in more teams and new

features," she added.

It is too early to quantify the effect of most of these moves,

and researchers say the impact may never be fully known because not

all of the interventions are disclosed. Still, some of the efforts

had noticeable results.

Take a tweet Mr. Trump sent on the evening before Election Day,

contending a Supreme Court decision on voting in Pennsylvania would

allow "unchecked cheating." In the nearly 37 minutes the tweet was

available before Twitter labeled it as disputed and potentially

misleading, it got 31,359 replies, retweets, quote tweets or

retweets of quote tweets, according to Joe Bak-Coleman, a

postdoctoral fellow at the University of Washington Center for an

Informed Public. In the following 37 minutes, it brought in fewer

than 5,700 -- a decline of 82%.

A separate analysis from social-media analytics firm Storyful

found that Facebook posts by President Trump, his campaign and

conservative outlets remained among the most viral on the platform

in the days following the election. Storyful is owned by News Corp,

which also owns Wall Street Journal publisher Dow Jones &

Co.

The view that the rules are selectively enforced has led many

conservatives to abandon Facebook and Twitter for other platforms,

such as Parler. The app, which calls itself a "free speech social

network," surged to the top of the download rankings for free apps

this week.

In part because of that criticism, the larger platforms now face

the challenge of articulating coherent enforcement strategies that

can be applied consistently, including in other countries where the

companies typically have fewer resources, say researchers who study

social media and misinformation.

"Once a company makes a move once, it is much easier for them to

do it again," said Graham Brookie, director of the Atlantic

Council's DFRLab, which studies political misinformation. "We

certainly crossed that Rubicon and aren't going back across."

Facebook has scheduled some postelection analysis sessions a few

weeks after the election where employees are expected to discuss

which of the measures the company took in recent weeks should last

longer, a person familiar with the matter said. Substantial changes

would require Facebook CEO Mark Zuckerberg's approval, the person

added.

One change that appears likely: Twitter said Mr. Trump's

account, which has grown to more than 88 million followers, would

no longer receive special privileges once he becomes a private

citizen. The loss of those privileges, which are reserved for world

leaders and public officials, would mean that some tweets that

violate the site's rules would be taken down rather than

labeled.

--Deepa Seetharaman contributed to this article.

Write to Emily Glazer at emily.glazer@wsj.com and Sam Schechner

at sam.schechner@wsj.com

(END) Dow Jones Newswires

November 11, 2020 11:33 ET (16:33 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

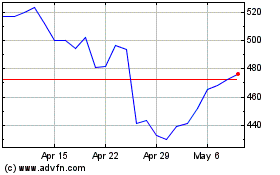

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From Apr 2024 to May 2024

Meta Platforms (NASDAQ:META)

Historical Stock Chart

From May 2023 to May 2024